Why Pre-Trained Models Matter for Machine Learning

Introduction

Imagine learning a new language from scratch. It takes months, maybe years, to master vocabulary, grammar, and pronunciation. But what if you had a shortcut? Suppose someone already fluent teaches you the key phrases and grammar rules so that you don’t have to start from zero. That is the basic idea behind pre-trained models in machine learning (ML)!

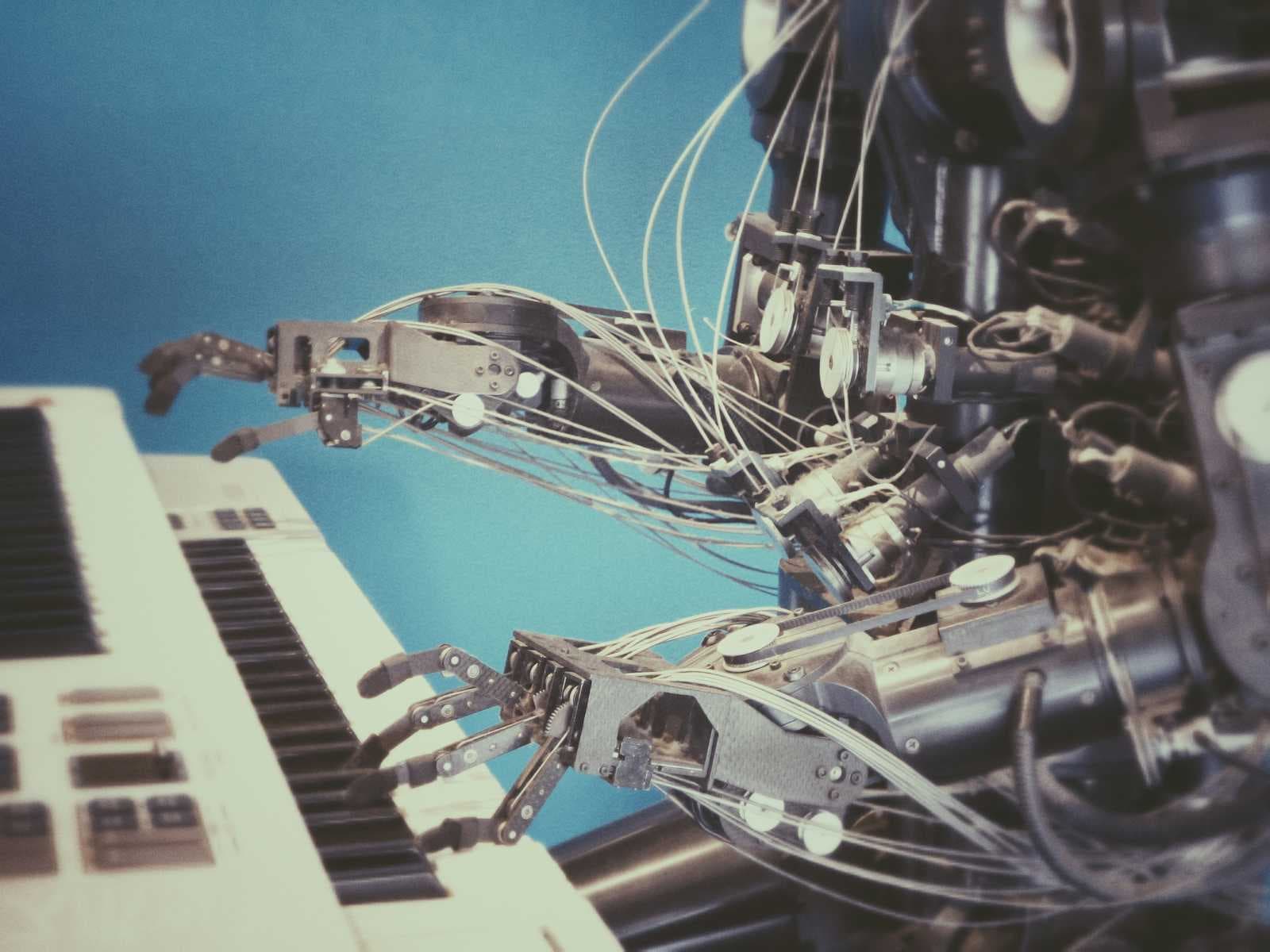

Instead of training an AI model from scratch, you start from a pre-trained model that has already learned from a massive dataset. This head start lets AI systems reach good performance on tasks like image recognition, language processing, and even medical diagnosis with far less time and task-specific data than training from nothing would need.

Let’s break this down in simple terms before diving into the technical details.

Understanding Pre-Trained Models in Everyday Terms

Learning from Experience vs. Learning from Scratch

Think about how children learn math. A young child starts by understanding numbers, then learns basic addition, subtraction, multiplication, and so on. By the time they reach advanced topics like algebra, they already have a foundation to build upon.

Now, imagine two students:

Student A has to learn algebra from scratch without any prior knowledge.

Student B has already studied basic math and only needs to build on what they know.

Student B will learn faster and perform better, because they can build on what they already know. Similarly, in machine learning, an AI model that has been pre-trained on large datasets can quickly adapt to new tasks without starting from zero.

📌 Pre-Trained Model: An AI model that has already been trained on a large dataset to recognize patterns and features, making it reusable for new tasks.

How Pre-Trained Models Work

Step 1: Initial Training on a Large Dataset

Before a pre-trained model can be used, it is trained on a massive dataset. For example:

A model trained on millions of images learns to recognize objects like cats, dogs, and trees.

A language model trained on billions of sentences understands grammar, context, and meaning.

📌 Dataset: A collection of structured or unstructured data used to train machine learning models.

Step 2: Fine-Tuning for a Specific Task

Once a model has been pre-trained, it can be fine-tuned to perform specialized tasks. Fine-tuning is typically much faster than training from scratch and needs far less task-specific data, because the model only has to adjust what it already knows.

For example:

A model trained on general images can be fine-tuned to detect medical conditions in X-rays.

A language model trained on general text can be fine-tuned for customer service chatbots.

📌 Fine-Tuning: The process of taking a pre-trained model and training it on a smaller, task-specific dataset to improve performance for a particular use case.
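
To make the two steps concrete, here is a minimal sketch in plain Python. It is a toy, not how real models are trained: the "model" is just a line with two parameters, and the datasets, the function names (`make_data`, `train`, `mse`), and the numbers are all made up for illustration. It "pre-trains" on a large dataset, then compares fine-tuning against training from scratch on a small, related dataset with the same small training budget.

```python
import random

def make_data(rng, n, w_true, b_true, noise=0.05):
    """Sample n noisy points from the line y = w_true * x + b_true."""
    data = []
    for _ in range(n):
        x = rng.uniform(-1.0, 1.0)
        data.append((x, w_true * x + b_true + rng.gauss(0.0, noise)))
    return data

def mse(params, data):
    """Mean squared error of the line (w, b) on a dataset."""
    w, b = params
    return sum((w * x + b - y) ** 2 for x, y in data) / len(data)

def train(params, data, steps, lr=0.1):
    """Run `steps` iterations of full-batch gradient descent on the MSE."""
    w, b = params
    n = len(data)
    for _ in range(steps):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w, b = w - lr * grad_w, b - lr * grad_b
    return w, b

rng = random.Random(0)

# Step 1: "pre-train" on a large dataset from a general task (y = 2x + 1).
pretrained = train((0.0, 0.0), make_data(rng, 1000, 2.0, 1.0), steps=200)

# Step 2: a related target task (y = 2.2x + 0.8) with only 10 labelled examples.
small_train = make_data(rng, 10, 2.2, 0.8)
held_out = make_data(rng, 200, 2.2, 0.8)

# Same small budget of 20 steps, but two different starting points.
from_scratch = train((0.0, 0.0), small_train, steps=20)
fine_tuned = train(pretrained, small_train, steps=20)

mse_scratch = mse(from_scratch, held_out)
mse_fine_tuned = mse(fine_tuned, held_out)
print(f"from scratch: {mse_scratch:.4f}  fine-tuned: {mse_fine_tuned:.4f}")
```

In this toy setup the fine-tuned line ends up with a lower error on the held-out data, because it starts close to the right answer and only needs a small correction. Real models have millions or billions of parameters, but the pattern is the same: start from learned parameters instead of from zero.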

Why Do Pre-Trained Models Matter?

1. They Save Time and Resources

Training AI models from scratch requires huge amounts of data, time, and computing power. Pre-trained models help bypass this costly process by providing a foundation that only needs fine-tuning.

📌 Computational Cost: The processing power and time required to train a machine learning model.
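
A rough way to see the saving is to count how many parameters actually get updated. The sketch below uses hypothetical layer sizes for a small image classifier (they are not taken from any real model) and compares training everything with training only a new final layer on top of a frozen, pre-trained backbone.

```python
# Hypothetical layer sizes (inputs, outputs) for a small image classifier.
# These numbers are for illustration only.
backbone_layers = [(3 * 32 * 32, 512), (512, 256)]  # pre-trained feature extractor
head_layer = (256, 10)                              # new task-specific classifier

def dense_params(shape):
    """Weights plus biases of one fully connected layer."""
    n_in, n_out = shape
    return n_in * n_out + n_out

backbone_params = sum(dense_params(s) for s in backbone_layers)
head_params = dense_params(head_layer)
total = backbone_params + head_params

print(f"training from scratch updates {total:,} parameters")
print(f"training only the head updates {head_params:,} parameters")
print(f"share of parameters updated: {head_params / total:.2%}")
```

Parameter count is only a proxy for cost. Even with a frozen backbone, every input still passes through all the layers, so the saving comes mainly from skipping gradient updates for the frozen part and from needing fewer examples and training steps.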

2. They Improve Accuracy

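Because pre-trained models have already learned useful patterns from large datasets, a fine-tuned model usually outperforms one trained from scratch on the same small dataset. The benefit tends to be largest when the new task resembles the data the model was originally trained on.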

📌 Generalization: The ability of a model to apply learned knowledge to new, unseen data.

3. They Enable Transfer Learning

Pre-trained models make transfer learning possible: knowledge gained from one task can be applied to another.

For example:

A model trained to recognize cars in images can be reused to recognize bicycles with some fine-tuning.

📌 Transfer Learning: A technique in machine learning where a model trained on one task is adapted for a different but related task.
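
One common way to do this is to keep the pre-trained part of the model frozen and train only a small new "head" on top of it. The plain-Python sketch below shows the idea under heavy simplification: `FROZEN_WEIGHTS` stands in for a pre-trained feature extractor (the values are invented, not learned), and the dataset is synthetic. Only the head's four numbers are ever updated.

```python
import math
import random

rng = random.Random(0)

# Stand-in for a pre-trained feature extractor: fixed weights we never update.
# (In practice these would come from training on a large source dataset.)
FROZEN_WEIGHTS = [[1.5, -0.5], [-0.7, 1.2], [0.3, 0.9]]

def extract_features(x):
    """Map a 2-D input to 3 features using the frozen weights."""
    return [math.tanh(w[0] * x[0] + w[1] * x[1]) for w in FROZEN_WEIGHTS]

def predict(head, feats):
    """Linear head on top of the features; head[-1] is the bias."""
    return sum(h * f for h, f in zip(head, feats)) + head[-1]

def loss(head, dataset):
    """Mean squared error of the head's predictions on a dataset."""
    errors = [(predict(head, extract_features(x)) - y) ** 2 for x, y in dataset]
    return sum(errors) / len(errors)

# Small synthetic dataset for the new task (illustration only).
dataset = []
for _ in range(30):
    x = (rng.uniform(-1, 1), rng.uniform(-1, 1))
    dataset.append((x, x[0] - 2 * x[1]))

head = [0.0, 0.0, 0.0, 0.0]  # the only trainable parameters
frozen_before = [row[:] for row in FROZEN_WEIGHTS]
loss_before = loss(head, dataset)

for _ in range(200):
    grads = [0.0] * len(head)
    for x, y in dataset:
        feats = extract_features(x)
        err = predict(head, feats) - y
        # Gradient for each feature weight, then for the bias (input of 1.0).
        for i, f in enumerate(feats + [1.0]):
            grads[i] += 2 * err * f / len(dataset)
    head = [h - 0.1 * g for h, g in zip(head, grads)]

loss_after = loss(head, dataset)
print(f"loss before: {loss_before:.4f}  loss after: {loss_after:.4f}")
```

In the cars-to-bicycles example, the frozen part would be the layers that already detect edges, wheels, and shapes, and the head would be the new layer that decides "bicycle or not". Frameworks such as PyTorch and TensorFlow offer their own ways to freeze layers; this sketch only shows the principle.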

4. They Make AI More Accessible

Not everyone has the resources to train massive AI models. Pre-trained models allow developers and businesses to leverage advanced AI capabilities without needing expensive infrastructure.

📌 Machine Learning Framework: A set of tools and libraries (like TensorFlow or PyTorch) that help in building and training AI models.

Real-World Examples of Pre-Trained Models

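GPT-4 (Language Model): Pre-trained on large-scale text data, it can be adapted for chatbots, translation, and content generation, usually through prompting or provider-hosted fine-tuning rather than by retraining the model yourself.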

ResNet (Image Recognition Model): Commonly pre-trained on ImageNet, which contains over a million labeled images, and used as a starting point for tasks like medical imaging and facial recognition.

BERT (Natural Language Processing Model): Pre-trained on large text corpora (BooksCorpus and English Wikipedia), it is commonly fine-tuned for search ranking and text classification tasks.

📌 Natural Language Processing (NLP): A branch of AI that enables machines to understand and interpret human language.

Conclusion

Pre-trained models are like students who already have a solid foundation of knowledge, allowing them to quickly learn new concepts. They save time, improve accuracy, and make AI more accessible for everyone.

Key Technical Terms Recap:

📌 Pre-Trained Model: An AI model already trained on a large dataset.

📌 Dataset: The structured or unstructured data used to train AI models.

📌 Fine-Tuning: Adapting a pre-trained model for a specific task.

📌 Computational Cost: The processing power and time required for AI training.

📌 Generalization: The ability to apply learned knowledge to new data.

📌 Transfer Learning: Using a pre-trained model for a different but related task.

📌 Machine Learning Framework: Tools for building and training AI models.

📌 Natural Language Processing (NLP): AI that understands human language.

🚀 Want to learn more about AI and ML? Follow me on Bits8Byte and share my articles with others!